SelenaCore Update: Dynamic Prompt Architecture and LLM Integration Refinement

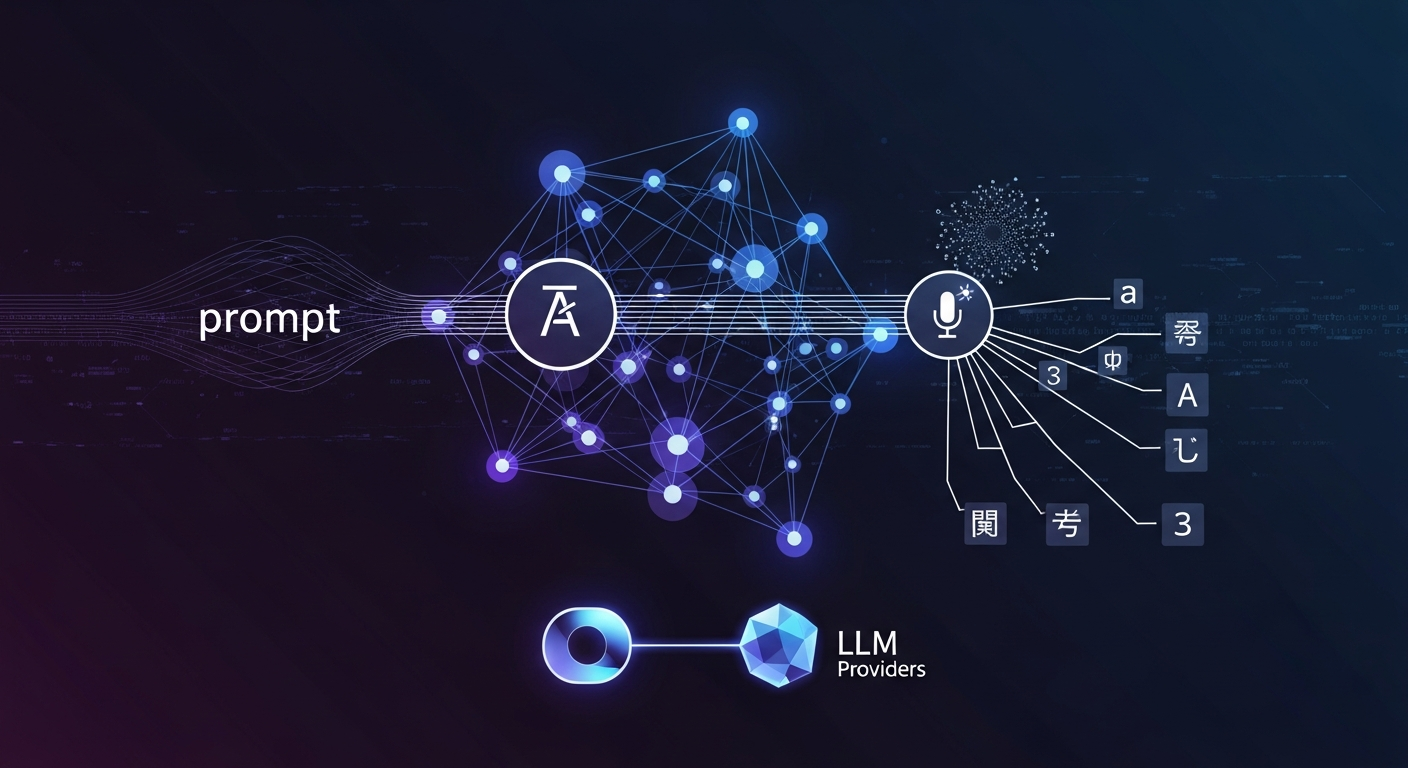

The latest SelenaCore update changes how the system builds prompts and talks to Large Language Models (LLMs). The highlight of this release is a dynamic prompt system (d77d25f) that supports internationalization (i18n), splits the prompt into separate hidden and user instruction fields, and enforces strict character limits on each field so that prompts stay within a predictable size.
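To make the three ideas above concrete (per-language templates, split hidden/user fields, and per-field character limits), here is a minimal sketch. It is not SelenaCore's actual code: the function name `compose_prompt`, the template table, and the limit values are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical limits; the real values used by SelenaCore are not stated in this post.
MAX_HIDDEN_CHARS = 2000
MAX_USER_CHARS = 500

# Hypothetical per-language hidden instructions (the i18n part).
HIDDEN_TEMPLATES = {
    "en": "You are a voice assistant. Reply in English.",
    "de": "Du bist ein Sprachassistent. Antworte auf Deutsch.",
}


@dataclass(frozen=True)
class PromptFields:
    hidden: str  # system-owned instructions, not shown to the user
    user: str    # instructions edited by the user in the settings UI


def clamp(text: str, limit: int) -> str:
    """Trim surrounding whitespace, then cut the text to at most `limit` characters."""
    return text.strip()[:limit]


def compose_prompt(language: str, user_instructions: str, fallback: str = "en") -> str:
    """Build one system prompt from the hidden template and the user field."""
    # Unknown languages fall back to the default template.
    hidden = HIDDEN_TEMPLATES.get(language, HIDDEN_TEMPLATES[fallback])
    fields = PromptFields(
        hidden=clamp(hidden, MAX_HIDDEN_CHARS),
        user=clamp(user_instructions, MAX_USER_CHARS),
    )
    if fields.user:
        return fields.hidden + "\n\n" + fields.user
    return fields.hidden
```

Keeping the two fields separate until the final join means each limit can be enforced independently, so an overlong user instruction can never push the hidden instructions out of the prompt.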

Alongside this backend change, the frontend's voice settings prompt editor has been redesigned (014c0e6), giving more granular control over how the AI interprets and responds to user input. Language settings are now persisted directly to core.yaml (06e4502), so the prompt is rebuilt automatically whenever the language is switched.

We have also refined our LLM provider integrations. The Ollama module now uses the /api/chat endpoint, and the handling of Google Gemini's systemInstruction field has been fixed (3e12d56). To streamline intent routing, the router now uses a centralized build_system_prompt utility (53b108e), which removes the hardcoded prompt strings previously scattered across the codebase.
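The two providers expect the system prompt in different places, which is why a single prompt source is useful. The sketch below builds request bodies following the publicly documented shapes of Ollama's /api/chat and Gemini's generateContent REST API. The helper names are hypothetical and the code sends nothing over the network; it only shows where the same prompt string would go in each payload.

```python
import json


def ollama_chat_payload(model: str, system_prompt: str, user_text: str) -> dict:
    """Request body for Ollama's /api/chat: the system prompt is a message with role 'system'."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_text},
        ],
        "stream": False,  # ask for a single JSON response instead of a stream
    }


def gemini_payload(system_prompt: str, user_text: str) -> dict:
    """Request body for Gemini generateContent: the system prompt lives in a
    top-level systemInstruction field, separate from the conversation contents."""
    return {
        "systemInstruction": {"parts": [{"text": system_prompt}]},
        "contents": [{"role": "user", "parts": [{"text": user_text}]}],
    }


if __name__ == "__main__":
    # Placeholder prompt and model name, used only for this demonstration.
    prompt = "You are a voice assistant."
    print(json.dumps(ollama_chat_payload("example-model", prompt, "Turn on the lights"), indent=2))
    print(json.dumps(gemini_payload(prompt, "Turn on the lights"), indent=2))
```

With a single function producing the prompt string, each provider module only has to decide where to place it, rather than maintaining its own copy of the text.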

Finally, as part of our ongoing optimization work, we have removed openwakeword from the requirements (49b1a63) and fully migrated wake-word detection to the Vosk engine for improved performance in local environments.